Future-fit reporting requires AI enablement, not just AI tools

Artificial intelligence is rapidly reshaping corporate reporting. The technological capabilities are impressive, and the productivity potential is undeniable. Yet the real differentiator for future-fit organizations will not be how quickly they adopt AI tools, but how deliberately they become AI-enabled.

By Christoph Magnussen

AI enablement means more than training employees to use a system interface. It refers to a structured, organization-wide capability: understanding how AI systems function, where their limitations lie, how outputs must be validated and how governance frameworks must evolve as autonomy increases. Without this depth of understanding, AI integration risks becoming superficial and, in capital-market contexts where credibility is the main currency, potentially hazardous.

From tool usage to understanding

Reporting teams are experimenting with generative AI to draft management commentary, summarize risk exposures, compare disclosures across periods and synthesize large volumes of information. But these experiments alone do not constitute capability.

Large language models, for instance, generate output by predicting likely sequences of text based on statistical patterns. They produce coherent, fluent language, but fluency can mask analytical gaps. A well-structured paragraph does not guarantee conceptual accuracy. A convincing explanation does not establish material relevance. Corporate reporting should therefore never conflate efficiency with reliability.

When applied thoughtfully, AI enhances reporting workflows in meaningful ways. It allows reporting teams to iterate more rapidly and explore broader perspectives. However, the final evaluation of relevance, balance and disclosure boundaries remains a human task. The reporting professional stays in the lead, defining objectives, framing context, interpreting results and validating outputs. The same principle applies to recipients who use AI tools and models to work through financial and corporate reports. Reporting teams should therefore also consider writing reports in a way that both humans and AI systems can process.

Governance as strategic imperative: from generative AI to agentic systems

The technological landscape is already evolving further. Beyond generative AI, organizations are beginning to deploy agentic AI systems: increasingly autonomous tools capable of planning, retrieving information from multiple data sources, interacting with software environments and executing multi-step workflows with limited supervision.

In reporting, this represents a structural shift. A generative system might draft a disclosure based on provided data. An agentic system could autonomously retrieve financial figures from ERP systems, reconcile variances across the data sets of several business units, generate explanatory narratives and prepare draft adjustments for review.

As autonomy increases, so does governance complexity. Questions of access control, traceability, validation and escalation become more acute. Who defines operational boundaries? How are automated data retrieval processes documented? At what point must human oversight intervene? How are errors detected and attributed?

Organizations must explicitly define what AI systems are authorized to access, initiate or modify within reporting workflows. The more capable the technology becomes, the greater the need for disciplined oversight. Introducing AI into corporate reporting without strengthening human AI literacy and governance does not simply create operational risk; it creates reputational exposure.

AI enablement as a continuous capability

Leadership must therefore establish clear principles. Validation standards, documentation requirements, transparency expectations and accountability structures must be defined before AI-supported processes scale. Where AI is instead treated merely as a productivity shortcut, informal practices proliferate. Future-fit organizations recognize that AI enablement is part of governance. It strengthens internal controls, supports audit readiness and protects stakeholder trust. Rather than asking "Can AI do this?", future-fit organizations ask: "What is sensible, responsible and value-creating for AI to do in this context?"

Technology will continue to evolve, models will become more sophisticated and agentic systems will gain broader operational scope. None of these developments diminishes the need for understanding. On the contrary, increasing capability heightens the importance of human literacy. AI enablement must therefore be an ongoing, everyday practice.

Key Takeaways

AI tools do not automatically improve reporting; AI literacy and enablement do.

Generative and agentic AI can strengthen corporate reporting, but humans remain accountable.

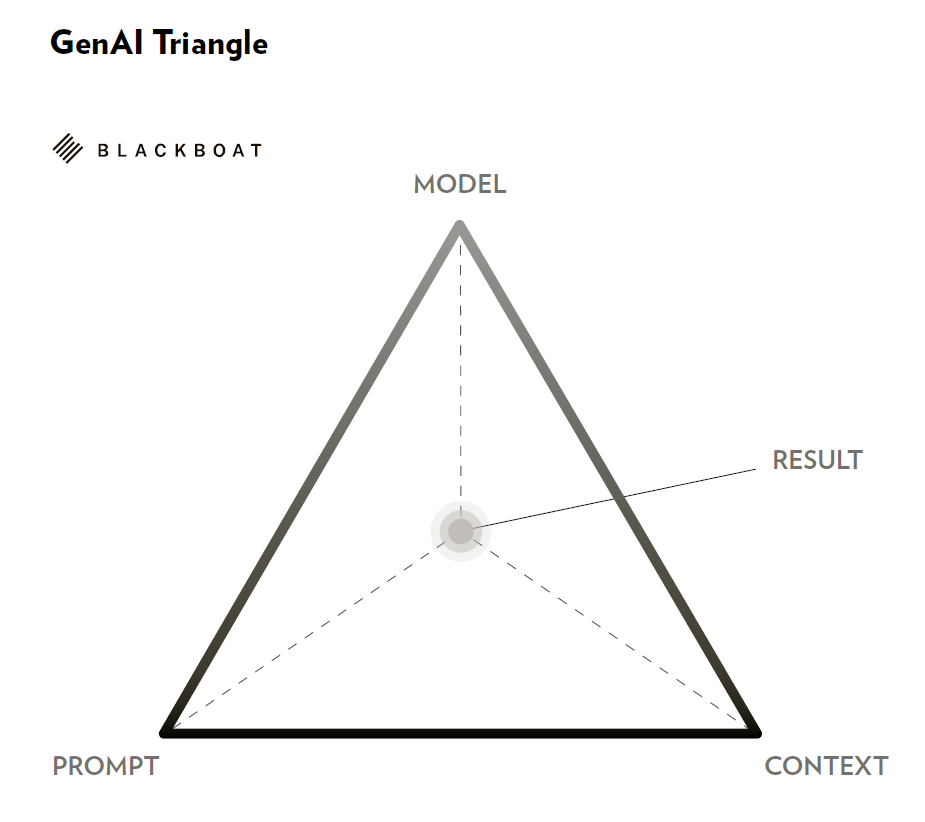

The GenAI Triangle (model, prompt, context) largely determines output quality.

Leadership must define governance frameworks for AI usage in reporting.

Continuous AI enablement is a strategic capability, not a one-time initiative.

The GenAI Triangle: Model. Prompt. Context

In practice, organizations often attribute varying output quality to differences in AI models. Experience suggests otherwise: the decisive factors usually lie in how systems are instructed and contextualized. The GenAI Triangle clarifies this dynamic and serves as the starting point for AI enablement. It consists of three interdependent elements: the model, the prompt and the context.

The model provides technical capability. The prompt defines the task and desired structure. The context supplies background information, constraints, intended audience and strategic framing. Only when these elements align does output become robust and decision-relevant.

In reporting environments, contextual precision is particularly influential. Supplying structured financial data, clarifying material themes and defining the stakeholder perspective significantly improve AI-assisted drafts. Changing the underlying model often yields only marginal improvement compared with refining prompt quality and contextual depth. The GenAI Triangle illustrates a broader principle: sustainable value from AI depends on human understanding of how to work with the system. This is why AI enablement is key.

Christoph Magnussen

is a podcaster and content creator who explains AI and the future of work on "AI to the DNA", "On The Way To New Work" and YouTube. By profession, he is CEO of Blackboat, a consulting firm that provides organizations with holistic advice on implementing technological and cultural solutions to strengthen collaboration.